KotlinYOLO

Overview

KotlinYOLO is a real-time object detection system built for Android-based smart glasses, focusing on efficient on-device inference using YOLO models in ONNX format.

The project explores the practical limits of edge AI deployment on constrained wearable hardware, balancing latency, thermal constraints, and usability.

Key Features

- Real-time object detection using YOLOv8n / YOLO11n models

- ONNX Runtime integration using Java/Kotlin APIs

- CameraX pipeline with dynamic resolution handling

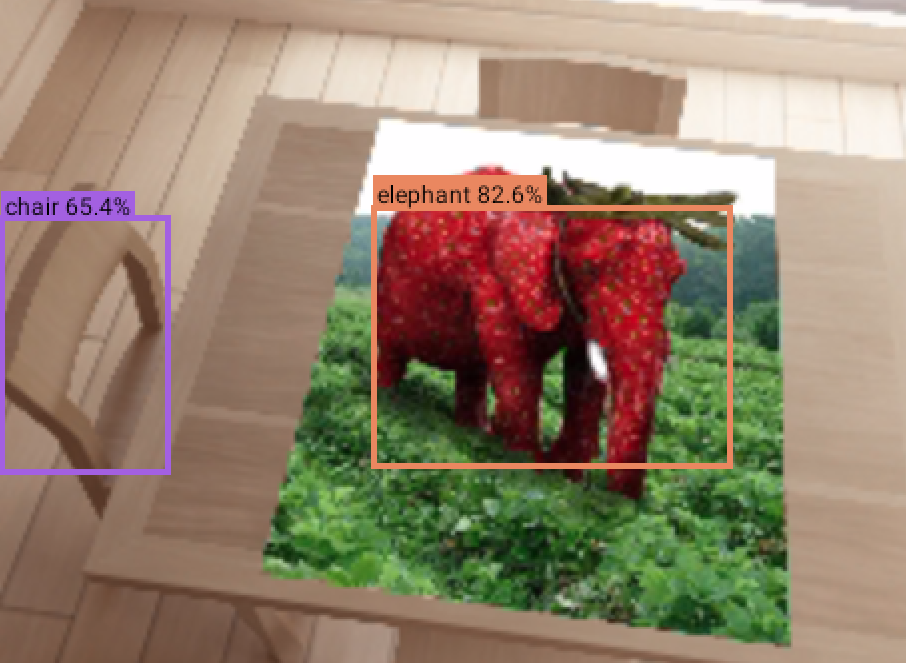

- Bounding box rendering on live camera feed

- Support for Vuzix M400 and Blade 2 devices

Architecture

The system follows a lightweight pipeline:

- Camera frame acquisition via CameraX

- Preprocessing into 640×640 tensor format

- ONNX model inference using OrtSession

- Post-processing (NMS, bounding box scaling)

- Overlay rendering to display detections
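The post-processing step in the pipeline above can be illustrated with a minimal sketch of greedy, per-class non-maximum suppression in plain Kotlin. The `Detection` type, the function names, and the default thresholds (0.25 score, 0.45 IoU) are assumptions chosen for illustration, not taken from the project. Decoding the raw model output into candidate boxes is not shown.

```kotlin
/** Hypothetical container for one candidate box in pixel coordinates. */
data class Detection(
    val x1: Float, val y1: Float, val x2: Float, val y2: Float,
    val score: Float, val classId: Int
)

/** Intersection over union of two axis-aligned boxes. */
fun iou(a: Detection, b: Detection): Float {
    val iw = maxOf(0f, minOf(a.x2, b.x2) - maxOf(a.x1, b.x1))
    val ih = maxOf(0f, minOf(a.y2, b.y2) - maxOf(a.y1, b.y1))
    val inter = iw * ih
    val union = (a.x2 - a.x1) * (a.y2 - a.y1) +
        (b.x2 - b.x1) * (b.y2 - b.y1) - inter
    return if (union > 0f) inter / union else 0f
}

/**
 * Greedy NMS: visit candidates from highest to lowest score and keep a box
 * only if it does not overlap an already kept box of the same class.
 * Threshold defaults are assumed values, not the project's settings.
 */
fun nms(
    candidates: List<Detection>,
    scoreThreshold: Float = 0.25f,
    iouThreshold: Float = 0.45f
): List<Detection> {
    val sorted = candidates
        .filter { it.score >= scoreThreshold }
        .sortedByDescending { it.score }
    val kept = ArrayList<Detection>()
    for (c in sorted) {
        val suppressed = kept.any { it.classId == c.classId && iou(it, c) > iouThreshold }
        if (!suppressed) kept.add(c)
    }
    return kept
}
```

This version is quadratic in the number of candidates, which is usually acceptable once low-score boxes have been filtered out; a real-time loop might additionally cap the number of candidates before sorting.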

Technical Challenges

- Achieving usable FPS on low-power wearable hardware

- Handling aspect ratio mismatch without distortion

- Minimising memory allocations in real-time loops

- Balancing CPU vs GPU execution paths in ONNX Runtime
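Two of the challenges above, aspect ratio mismatch and per-frame allocations, can be sketched together in plain Kotlin. The sketch letterboxes a frame into a square input (uniform scale plus padding) and writes it into a buffer that is allocated once and reused. The names `Letterbox`, `computeLetterbox`, `toSourceCoords`, and `InputBuffer`, the ARGB `IntArray` input, the nearest-neighbour sampling, and the grey padding value of 114 are all assumptions for illustration, not the project's actual implementation.

```kotlin
/** Uniform scale and padding that map a source frame into a square input. */
data class Letterbox(val scale: Float, val padX: Float, val padY: Float)

fun computeLetterbox(srcW: Int, srcH: Int, dst: Int = 640): Letterbox {
    require(srcW > 0 && srcH > 0 && dst > 0)
    // One scale for both axes, so the image is never stretched.
    val scale = minOf(dst.toFloat() / srcW, dst.toFloat() / srcH)
    return Letterbox(scale, (dst - srcW * scale) / 2f, (dst - srcH * scale) / 2f)
}

/** Maps [x1, y1, x2, y2] from model-input space back to source pixels, in place. */
fun toSourceCoords(box: FloatArray, lb: Letterbox, srcW: Int, srcH: Int) {
    box[0] = ((box[0] - lb.padX) / lb.scale).coerceIn(0f, srcW.toFloat())
    box[1] = ((box[1] - lb.padY) / lb.scale).coerceIn(0f, srcH.toFloat())
    box[2] = ((box[2] - lb.padX) / lb.scale).coerceIn(0f, srcW.toFloat())
    box[3] = ((box[3] - lb.padY) / lb.scale).coerceIn(0f, srcH.toFloat())
}

class InputBuffer(private val size: Int = 640) {
    /** Allocated once and overwritten on every frame (CHW layout, values in 0..1). */
    val data = FloatArray(3 * size * size)

    /** Letterboxes an ARGB frame into [data] without allocating new arrays. */
    fun fill(argb: IntArray, srcW: Int, srcH: Int): Letterbox {
        require(argb.size >= srcW * srcH)
        val lb = computeLetterbox(srcW, srcH, size)
        val plane = size * size
        data.fill(114f / 255f) // grey padding (assumed convention)
        val x0 = lb.padX.toInt()
        val y0 = lb.padY.toInt()
        val w = (srcW * lb.scale).toInt()
        val h = (srcH * lb.scale).toInt()
        for (y in 0 until h) {
            val sy = minOf((y / lb.scale).toInt(), srcH - 1) // nearest source row
            for (x in 0 until w) {
                val sx = minOf((x / lb.scale).toInt(), srcW - 1)
                val p = argb[sy * srcW + sx]
                val i = (y0 + y) * size + (x0 + x)
                data[i] = ((p shr 16) and 0xFF) / 255f            // R plane
                data[plane + i] = ((p shr 8) and 0xFF) / 255f     // G plane
                data[2 * plane + i] = (p and 0xFF) / 255f         // B plane
            }
        }
        return lb
    }
}
```

For a 1280×720 frame and a 640×640 input, `computeLetterbox` gives a scale of 0.5 with 140 pixels of padding above and below. The returned `Letterbox` would be kept alongside the frame so that detections can be mapped back with `toSourceCoords` before rendering.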

What I Learned

- Practical constraints of edge AI vs cloud inference

- Android performance optimisation at the system level

- Trade-offs between model size, accuracy, and latency

- Designing systems for real-world hardware limitations

Future Work

- Segmentation model support (YOLOv8-seg)

- Hardware acceleration improvements

- Server-assisted inference fallback

- Integration into production AR workflows